The Magic Fridge: Data Warehousing on a Budget

Part 1 of the Lean Data Stack series, in which I look at the Modern Data Stack through the lens of a business on a tight budget

A few weeks ago, I watched Boiling Point, a single-shot movie starring Stephen Graham as a beleaguered head chef in a dysfunctional restaurant. Without wishing to spoil it, most of what you could imagine going wrong in a restaurant does go wrong, all within an anxiety-inducing ninety minutes.

As somebody with an annoying tendency to draw analogies between Data functions and restaurants, this movie was overstimulating. Missing supplies of beef and lamb were missing data pipelines. The unkept paperwork resulting in a food hygiene rating downgrade was like a data model built without documentation. The list goes on, but contains too many spoilers.

It’s surely not just operational mishaps that keep restaurateurs awake at night. In a world of skyrocketing energy prices, the cost of running commercial fridges, freezers and ovens must be substantial. While, to some extent, this knowledge may make you more sympathetic the next time the bill arrives, I mention this purely to serve my analogy between kitchen apparatus and data warehouses.

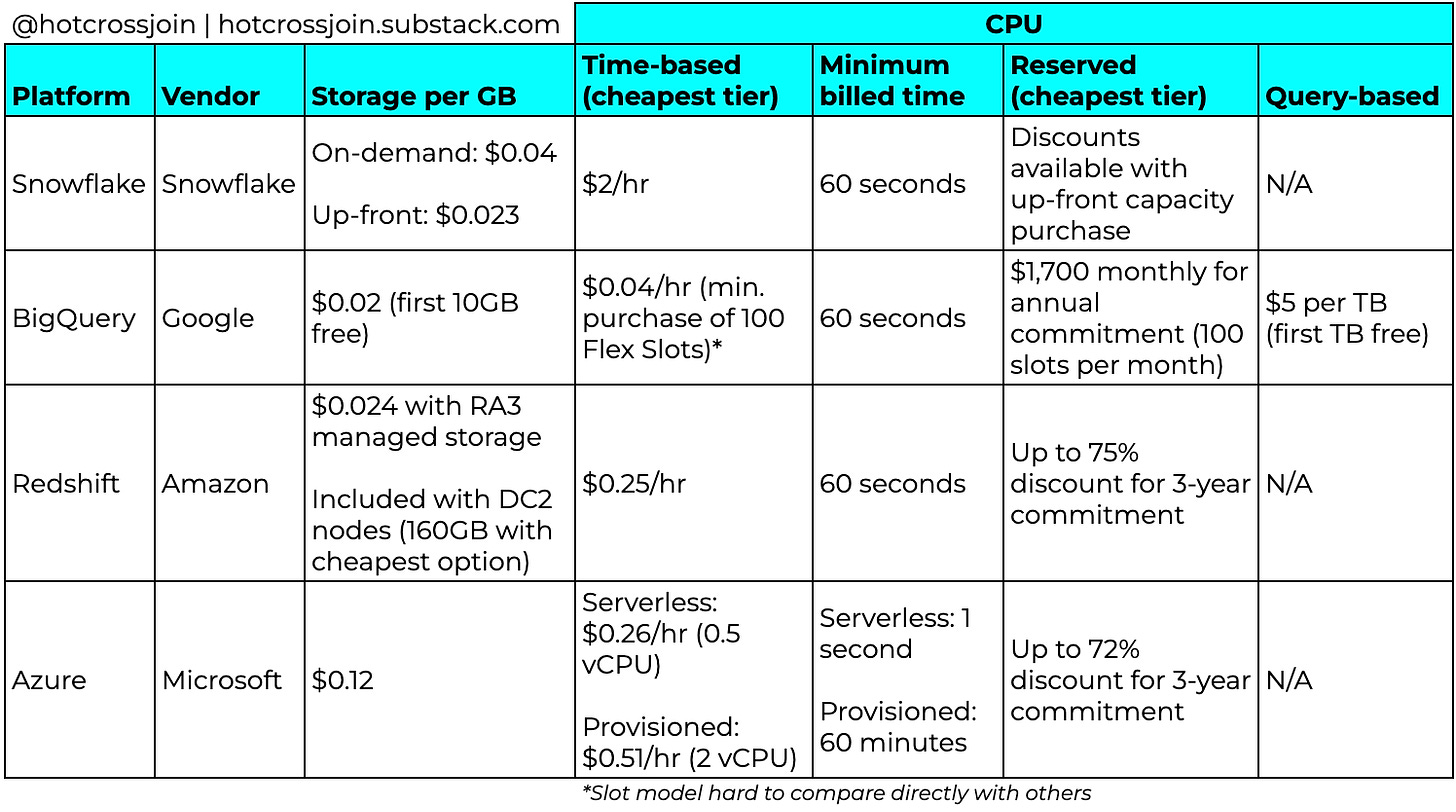

The costs of modern cloud data warehouses can be split into two categories: storage and compute.

Storage is the cost of storing your data in a warehouse, ready for querying. This is your fridges and freezers. It’s safe to assume that you will always need some amount of storage.

Compute is the cost of querying your data, turning raw ingredients into something that’s ready for consumption. This is your ovens and hobs. Unless your restaurant operates 24/7, you won’t be using this constantly, but when you do, it can be expensive to run.

Determining the cost effectiveness of a data warehouse means understanding each of those costs, and how they fit with your expected usage pattern.

Unfortunately, it’s not as simple as just looking at a few pricing pages side-by-side and drawing a comparison. Each of the four leading platforms has its own pricing dynamics.

Likewise, you can’t evaluate data warehousing platforms in isolation from your existing technology. While Snowflake can be deployed on any of Google Cloud Platform (GCP), Amazon Web Services (AWS) or Azure, the other three platforms are all specific to the ecosystems of GCP, AWS and Azure respectively, and integrate best with other services within those ecosystems. For example, a business already using AWS for its core product infrastructure is likely to give most consideration to Redshift and Snowflake.1

So, selecting a data warehouse is not a no-brainer, and that’s before we even consider factors like performance and scalability, which I won’t cover here.

Storage: The Magic Fridge

While a restaurant’s stock levels will naturally ebb and flow, its storage capacity remains constant. Fridges don’t grow and shrink to fit the volume of food to be stored. Before the days of the cloud, this is how on-premise data storage worked (and still does, in many businesses). Storing data in the cloud allows capacity to be auto-scaled up or down depending on how much data you need to store.

You can, therefore, think of cloud storage as a kind of magic fridge. Put a salmon in the fridge, and your fridge grows just enough to accommodate that salmon. Remove a salmon, and the fridge shrinks so that you don’t waste energy cooling that dead space. This eliminates up-front investment in redundant capacity, and saves the hassle of managing capacity as you consume and replenish your salmon inventory.

Snowflake: $0.04 per GB per month (or $0.023 if paid up front)

BigQuery: $0.02 per GB per month (first 10GB is free)

Redshift (with managed storage): $0.024 per GB per month

Azure: $0.12 per GB per month

BigQuery’s 10GB free tier isn’t as exciting as it may sound - it’ll only save you $0.20 per month - but its per-unit price is the lowest. Throw as many salmon into that fridge as you like.

Compute: Don’t burn down the restaurant

Compute is where it gets interesting. Imagine that there are two ways in which you can pay for the gas that powers the hobs in your restaurant. You can either: a) pay in proportion to the amount of time your hobs are ignited; or b) pay in proportion to the amount of food you cook on the hobs.

The former is analogous to the time-based pricing models of Redshift, Snowflake and Azure, while the latter is closer to BigQuery’s on-demand pricing model.

Time-based Pricing

Time-based costs depend on how many CPUs are running, and for how long. All three time-based providers also have multiple compute tiers, which cost more for higher-memory CPUs.

The benefit of a time-based model is that your cost is predictable. You pay for the time your compute resources are available, not for what you consume. With a query-based model, data consumption is unbounded, unless you enforce arbitrary limits. In a short space of time, you could run up a huge bill with a few huge queries. Under a time-based model, this won’t happen unless you scale up your compute resources.

Likewise, if you have a constant need to access the data, or a highly predictable time range when all the querying happens, time-based models can be efficient, as you will generally get good utilisation out of the compute resources that you pay for.

Just remember to turn off the hob when you’re done. Azure’s provisioned model and Snowflake both allow you to auto-pause your CPUs after a period of inactivity, and auto-resume the next time a query is run. Snowflake is more generous than Azure here, as Snowflake has a minimum active period of one minute, compared to one hour for Azure. This means that, if you auto-resume your Azure CPU, you will pay for at least one hour, even if you only need to run a single query. On the other hand, with the cheapest CPU options, Snowflake ($2/hr) charges 4x what Azure ($0.51) charges per hour, so Snowflake doesn’t necessarily work out cheaper2.

Redshift doesn’t appear to offer this feature out-of-the-box3. All you can do is set a pause schedule, which lets you pause your CPU and save some cost during predetermined windows, but isn’t ideal if people want to look at dashboards at unexpected times.

Reserved Instances

If your needs are predictable - which at a start-up they’re probably not - you can save a lot of cash with Redshift’s Reserved Instance pricing. In exchange for committing to a 1-3 year contract, you can get discounts of between 20%-75% compared to on-demand pricing. If you have a long cash runway and predictable usage over a long period of time, this is a big tick for Redshift, as well as Azure which offers a similar discounting model. Sadly though, I doubt that you have either of those things. Almost nobody in a VC-backed business right now wants to commit to an up-front annual (let alone triennial) software bill, regardless of the savings.

Query-based Pricing

Finally, what if you only want to pay for what you cook? On the face of it, BigQuery’s on-demand pricing is the most satisfying. Run a query; pay for a query. Don’t pay for idle time. For some businesses, this will be the cheapest model. If BigQuery isn’t supporting anything that’s in constant use, and the queries are intermittent and low in volume, it may be cheaper than a time-based model, where you’ll overpay for unused CPU resource.4

BigQuery charges $5 per TB of querying, also giving you the first TB per month for free. For a small business, this may result in zero cost. For the first six months or so after implementing BigQuery at Impala, we paid nothing thanks to the freebies.

BigQuery also offers a flat-rate model, whereby you pay for “slots” (virtual CPUs) on one of three plans:

Flex slots: Similar to Snowflake’s model, you pay for a minimum of 60 seconds of CPU time. Best for short-term, spiky workloads.

Monthly: Minimum of $2,000 per month

Annual: Minimum of $1,700 per month

This gives a mature business with predictable workloads a more cost-effective alternative to on-demand pricing, however the monthly and annual plans both appear excessive to me, as somebody whose entire monthly (on-demand) BigQuery bill is usually below $500, despite it being at the centre of the company’s reporting and analytics.

So, for me, the cost question comes down to predictability and runway. Do you know how long the hobs are going to need to be on for, and will you use them heavily throughout that time? If so, a time-based model is probably the better fit. Do you have the capital to pay for that hob capacity up-front, without jeopardising your runway? If so, consider purchasing a reserved instance at a discount. If the answer to the first question was no, BigQuery’s on-demand pricing should work out cheapest.

That’s not to say that you can’t, for example, implement BigQuery in a business that uses AWS. I have done this before and it works just fine.

Query performance across these two options may not be like-for-like; I’m purely looking at cost. You may, for instance, need to pay more on Azure than the minimum $0.51/hour to match Snowflake’s performance.

You could probably build auto-pause/resume logic using a Lambda function.

It’s worth also mentioning Azure’s serverless model, which is time-based rather than query-based, but is billed by the second. So, in practice, it broadly mimics BigQuery as you pay for virtually no idle time. For the same CPU resources though, it works out four times more expensive than Azure’s provisioned model.